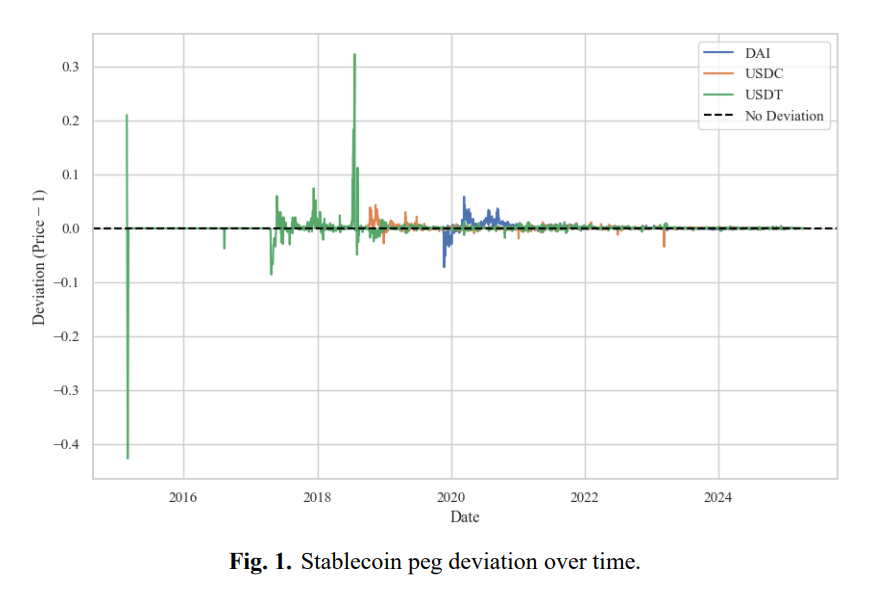

In October 2018, USDT, the largest stablecoin by volume, traded at approximately $0.87 on major exchanges. USDC, in its early period, regularly traded above $1. Neither outcome was a crisis. Both were normal for what the market was at the time: few participants, thin liquidity, slow arbitrage, fragmented trading across exchanges with no efficient way to close price gaps.

Between 2019 and 2023, according to BIS data, stablecoins traded within a tight band around $1 approximately 94% of the time. Deviations became smaller. Recovery became faster.

The tokens themselves had not changed in any fundamental way. What changed was the infrastructure built around them, who could access redemption, how many participants were willing to arbitrage, and whether the market trusted that a $1 redemption was real.

That is the argument this piece makes: a stablecoin peg is a market outcome. And market outcomes are built.

Key Takeaways

- In October 2018, USDT fell to approximately $0.87. Between 2019 and 2023, stablecoins traded near $1 around 94% of the time. The token didn't change. The market around it did.

- Peg stability comes from three layers: primary market access, secondary market arbitrage, and participant trust, and it requires all three simultaneously.

- USDC maintained an average secondary-market discount of ~1 basis point. USDT's was ~55. The gap traces to one variable: USDC had 500+ monthly arbitrageurs; USDT had roughly 6.

- Reserves don't hold the peg. They create the conditions under which the market can hold it if redemption access is fast, broad, and predictable.

- Trust is structural. It determines whether participants arbitrage or step aside. Tether's elimination of commercial paper in 2022 shifted that calculation without changing the token's design.

How the peg forms: three layers

Peg stability is the result of three layers working simultaneously. Remove any one and the other two cannot compensate.

- The first is the primary market, the direct channel between issuer and authorized participants for minting and redeeming at $1. It sets the economic anchor.

- The second is the secondary market: exchanges, DEXs, and OTC desks, where the actual price forms and where arbitrageurs act on the primary market anchor to pull that price back toward $1.

- The third is the trust layer, the set of beliefs participants hold about reserve quality, redemption speed, and issuer reliability. It determines whether participants choose to arbitrage at all.

None of these layers works in isolation. A well-designed primary market with no arbitrageurs produces deviations that persist. A deep secondary market built on fragile trust collapses the moment that trust does. The peg holds when all three are functioning at once.

Primary market as the anchor

The primary market is where the economic logic of a stablecoin begins. Authorized participants can mint or redeem tokens directly with the issuer at $1. If the market price falls below that, someone buys at a discount and redeems for a dollar. The spread is the profit. In theory, this alone should keep the peg tight.

What the theory skips is access. Primary market access is gated. Minimum transaction sizes, onboarding requirements, and settlement timelines that can run from hours to days mean that the theoretical arbitrage mechanism is only available to a limited group.

Federal Reserve Board research confirms the consequence: even with fully backed reserves, restricted or slow redemption directly reduces arbitrage efficiency. Deviations persist longer because fewer participants can act on them.

The primary market does not hold the peg by itself. It creates the conditions under which the market can stabilize the peg. The distinction matters for issuers: a redemption mechanism that works on paper but is inaccessible at speed or scale is a design feature that the market cannot use.
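The economics of that anchor, and the way access frictions erode it, can be sketched in a few lines. This is an illustration under stated assumptions, not any issuer's actual terms: the function names, the fee, the settlement delay, the funding rate, and the minimum redemption size are all hypothetical parameters.

```python
def redemption_arbitrage_profit(
    market_price: float,
    size_tokens: float,
    redemption_fee_bps: float = 0.0,
    settlement_days: float = 0.0,
    annual_funding_rate: float = 0.0,
) -> float:
    """Net USD profit from buying below $1 and redeeming at $1 with the issuer.

    All cost parameters are hypothetical and only illustrate the frictions
    described in the text (fees and settlement delay).
    """
    cost = market_price * size_tokens                 # paid on the secondary market
    gross = 1.0 * size_tokens                         # received from the issuer at par
    fee = gross * redemption_fee_bps / 10_000         # issuer-side redemption fee
    # Capital is tied up until redemption settles, which has a funding cost.
    funding = cost * annual_funding_rate * settlement_days / 365
    return gross - cost - fee - funding


def can_participate(size_tokens: float, minimum_redemption: float) -> bool:
    """Gating check: trades below the issuer's minimum size cannot redeem."""
    return size_tokens >= minimum_redemption


# Hypothetical terms: 10 bps fee, 2-day settlement, 5% annual funding cost.
wide = redemption_arbitrage_profit(0.9945, 1_000_000, 10, 2, 0.05)   # 55 bps discount
tight = redemption_arbitrage_profit(0.9999, 1_000_000, 10, 2, 0.05)  # 1 bp discount
```

Under these assumed terms, the 55-basis-point discount leaves roughly $4,200 on a $1 million trade, while the 1-basis-point discount loses money after fees. A participant who cannot clear the minimum size never gets to run the calculation at all.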

Secondary market: where the peg actually holds

If the primary market sets the anchor, the secondary market is where the rope either holds or snaps. Price formation happens on exchanges and DEXs. When a stablecoin trades below $1, arbitrageurs buy it there and redeem with the issuer. The gap closes and the peg recovers.

How quickly that happens and how deep the deviation goes before it does depends on who is willing to act.

The USDT and USDC comparison from the 2021–2022 period makes this concrete. USDT averaged a secondary-market discount of around 55 basis points. USDC averaged approximately 1 basis point.

The difference in reserve quality or redemption mechanics between the two was not large enough to explain a 54-basis-point gap. The explanation sits elsewhere: USDT had roughly 6 active monthly arbitrageurs. USDC had more than 500.

More participants means smaller deviations, faster recovery, and less exposure to any single actor's willingness to trade on a given day. That is the structural finding and it points directly to what issuers need to build.

There is a second-order consequence worth naming. Research from the University of Chicago shows that more efficient arbitrage reduces deviations in normal market conditions but increases sensitivity to mass redemption events. Tighter arbitrage makes the system faster in both directions. Issuers should plan for that.

Breadth of listings compounds the participant depth dynamic. A stablecoin present across more venues and integrated into more use cases has more distributed liquidity. Localized imbalances, a spike in selling pressure on one exchange, get absorbed rather than amplified.

Trust layer: the variable that governs everything else

Reserve quality and transparency do not hold the peg mechanically. They determine whether market participants believe the peg is worth holding.

Tether's elimination of commercial paper from its reserves in 2022 illustrates this. The operational structure of the token did not change. What changed was the risk calculation for arbitrageurs and institutional participants who had been uncertain whether redemption would work as advertised under pressure. That shift in confidence had measurable market effects.

The BIS frames the underlying dynamic precisely: transparency's stabilizing effect is conditional on the starting level of trust. When trust is high, more disclosure stabilizes. When trust is fragile, the same disclosure can accelerate the reaction it was meant to prevent.

For issuers, this means the trust layer is a structural one. Participants will arbitrage only when they hold three beliefs at once: the redemption mechanism works, the token is genuinely worth $1, and the reserves can be liquidated fast enough to make that true under stress. All three must hold. A gap in any one is enough to produce hesitation, and hesitation at the wrong moment is a depegging event.

What issuers must build

The USDT and USDC history produces a clear conclusion: peg stability is not designed in at launch. It is built over time through deliberate infrastructure decisions. Four of those decisions matter most.

- Distribute liquidity at the right market points. Liquidity needs to exist in DEX pools with direct arbitrage relevance (stablecoin/stablecoin pairs, including direct USDC and USDT pairs) and across CEX order books for key trading pairs. Incentive programs should be dynamic: liquidity rebates and market-maker programs need to intensify when deviations widen.

- Build institutional arbitrage at scale. Market makers, proprietary trading firms, and crypto-native funds with primary market access need to be onboarded with operational terms that go beyond access. KPIs should cover spread commitments, depth requirements, and minimum activity levels. The goal is to make arbitrage constant rather than episodic.

- Expand the participant base. The single biggest predictor of peg tightness is participant count. Concentration in a small number of institutional actors creates fragility: if those actors step back, there is no one to replace them. Reducing minimum thresholds, simplifying onboarding, and lowering barriers for smaller participants directly addresses this.

- Build stress-period protocols before they are needed. A system that monitors peg deviation, liquidity depth, and recovery speed with pre-defined responses at each threshold is not optional infrastructure. The time between a depegging event starting and confidence beginning to unwind is short. Issuers who have to design their response in real time will lose it.
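The last point, pre-defined responses at each threshold, is easy to make concrete. The sketch below is a minimal illustration rather than a recommended configuration: the `PegSnapshot` fields, the tier names, the cutoffs (25 and 100 basis points, $1 million of depth, 60 minutes off peg), and the listed responses are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PegSnapshot:
    price: float            # secondary-market price in USD
    depth_usd: float        # resting bid liquidity near $1
    minutes_off_peg: float  # time since price left the normal band


def deviation_bps(price: float) -> float:
    """Absolute distance from $1, in basis points."""
    return abs(1.0 - price) * 10_000


def stress_tier(s: PegSnapshot) -> str:
    """Map a snapshot to a tier using hypothetical, pre-agreed cutoffs."""
    dev = deviation_bps(s.price)
    if dev >= 100 or s.depth_usd < 1_000_000:
        return "critical"
    if dev >= 25 or s.minutes_off_peg >= 60:
        return "elevated"
    return "normal"


# Illustrative playbook: each tier has a response decided in advance.
RESPONSES = {
    "normal": "no action",
    "elevated": "raise market-maker rebates, notify arbitrage partners",
    "critical": "activate stress liquidity, publish redemption status",
}

snapshot = PegSnapshot(price=0.996, depth_usd=5_000_000, minutes_off_peg=10)
print(stress_tier(snapshot), "->", RESPONSES[stress_tier(snapshot)])
```

The design choice that matters is that the mapping from observation to response exists before the event, so nobody has to negotiate it while the deviation is widening.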

Conclusion

The history of stablecoin pegs is a story about market infrastructure catching up to a promise.

USDT made that promise in 2014. The market took years to develop the depth, the participants, and the operational maturity to keep it reliably. USDC built tighter conditions around the same promise faster, which is why its peg deviation was 54 basis points smaller despite operating in the same market.

The lesson is direct: the peg you announce at launch is not the peg you will have. What you will have is determined by how many participants can arbitrage, how quickly they can access the primary market, and whether they trust that the dollar on the other side of the trade is real. None of those conditions are set at issuance. All of them are built.

Frequently Asked Questions

Why do stablecoin pegs break?

Stablecoin pegs break when arbitrage fails. That can happen because participants lack access to the primary market (mint/redeem), because liquidity on secondary markets is too thin to absorb selling pressure, or because trust in the issuer collapses and participants choose not to arbitrage even when the economics support it. All three layers need to function simultaneously. A failure in any one is enough to produce a depegging event.

What is the difference between the primary and secondary market for stablecoins?

The primary market is the direct channel between the issuer and authorized participants who can mint or redeem tokens at exactly $1. The secondary market is everywhere else: exchanges, DEXs, OTC desks, where price floats freely and must be pulled back toward $1 by arbitrageurs acting on the primary market anchor. The primary market sets the economic incentive. The secondary market determines whether enough participants can act on it fast enough to matter.

Why does USDC have a tighter peg than USDT?

Research from 2021–2022 shows USDC had more than 500 active arbitrageurs per month. USDT had roughly 6. That difference in participant depth explains most of the gap between USDC's ~1 basis point average secondary-market discount and USDT's ~55 basis points. More participants means faster correction and less dependence on any single actor's willingness to trade on a given day.

Do high-quality reserves guarantee stablecoin peg stability?

No. Reserves are a precondition. Even fully collateralized stablecoins depeg when redemption access is slow or restricted, when too few participants can act as arbitrageurs, or when market confidence in the issuer breaks down. Reserve quality affects trust, trust affects participation, and participation is what holds the peg. The chain has multiple links and needs all of them.

What should a stablecoin issuer prioritize to maintain peg stability?

Four things: broad liquidity distribution across CEXs and DEXs with dynamic incentive programs that respond to deviation; institutional arbitrage partners with defined KPIs covering spread, depth, and activity minimums; low barriers to entry for smaller arbitrageurs to prevent concentration risk; and a real-time monitoring system with pre-defined stress responses. Participant count is the strongest predictor of peg tightness; everything else serves that goal.


Keeping the Peg: How Stablecoin Stability Actually Works

Stablecoin pegs are built over time through market infrastructure, arbitrage depth, and trust. Explore how USDT and USDC achieved peg stability, and what it means for new issuers.

Nobody out-resourced OpenAI, Google, or Amazon. These three AI startups found a different way in and each one used a move the giant in front of them couldn't copy without hurting itself.

Key Takeaways

- Structural gaps beat feature gaps. Mistral, Perplexity, and ElevenLabs found one dimension the giant couldn't compete on without damaging their core business: data sovereignty for Mistral, answer-first search for Perplexity, developer-first voice AI for ElevenLabs.

- The best positioning is the kind the competitor can't copy. OpenAI can't go fully open source without unraveling its monetization model. Google can't switch to direct answers without removing ad inventory. Amazon can't make voice AI its primary focus without deprioritizing its cloud business.

- Revenue efficiency matters more than headline valuation. All three companies scaled fast with small teams. Perplexity's 4.7x ARR growth with ~250 people, Mistral's path to $1B revenue from a standing start in 2023 – these numbers reflect capital discipline.

- The right question before entering a market is "what are the incumbents prevented from doing?" Regulatory constraints, business model conflicts, customer base limitations, any of these can create a gap more durable than anything a startup builds. Finding that gap before building saves years of competing on the wrong axis.

Mistral or How to Win on Positioning

April 2023. Three researchers from DeepMind and Meta registered a company in Paris. One month later, they closed a €105M seed round. The timing was precise: ChatGPT had just redefined the market, European companies were looking for alternatives, and no credible option existed yet.

Mistral's bet was simple on paper and hard to execute. While OpenAI, Anthropic, and Google were racing to build the most powerful closed models, Mistral went open source under Apache 2.0 licenses, because it was the one move a US-based closed model company couldn't match without unraveling its entire business model.

The strategy worked for a specific reason. European enterprises (banks, governments, defense agencies) have hard constraints that American cloud AI can't satisfy. When Mistral says "deploy this on your own servers, the model is yours," that is the real product.

In late 2025, Mistral partnered with SAP and the French and German governments to build a sovereign AI stack for public administrations, ensuring government data is processed using EU-compliant technology. HSBC also chose Mistral as an AI partner for private cloud deployment, giving the bank flexibility, data security, and lower latency compared to cloud alternatives.

ASML, the Dutch semiconductor equipment giant, led a €1.3 billion Series C and took an 11% stake. Total funding since 2023 has crossed €2.8 billion. CEO Arthur Mensch said at Davos in January 2026 the company is on track to exceed $1 billion in revenue by end of year.

The takeaway: Mistral built the only model a specific set of buyers could actually use. The question worth asking before you start competing: is there a segment your main competitor is structurally excluded from? Not unwilling to serve, but excluded by their own business model or regulatory exposure?

Perplexity: Exploit the Problem the Giant Can't Fix

Perplexity's founding pitch took about fifteen seconds to explain. Google gives you links, but we give you answers.

That insight wasn't obvious when the company started in 2022 with four people and a narrow hypothesis: search is broken for people who need to know things, and Google can't fix it without destroying its advertising business. Every link Google shows is a monetization opportunity. Switching to direct answers means removing the unit of ad inventory.

According to AI Funding Tracker, in January 2024, Perplexity was worth around $500 million. By December 2024, investors valued it at $9 billion. By September 2025, the number hit $20 billion – a 40x valuation jump in under two years, from a company with roughly 250 employees and no advertising budget.

Perplexity reached $200 million in annual recurring revenue by October 2025, representing 4.7x growth year-over-year. Growth came almost entirely from product quality and word of mouth.

The distribution strategy evolved intelligently. Samsung launched a Perplexity AI-powered TV app as part of Vision AI Companion, available on all 2025 Samsung TVs, with users receiving a free 12-month Pro subscription. Hardware partnerships replaced the sales team. Every device integration is an acquisition channel without acquisition cost.

The business model decision is worth examining separately. Perplexity tried advertising in 2024 and killed it. Executives concluded user trust is worth more than ad revenue, a direct contrast to how Google is structurally forced to operate. The company bet on subscriptions and enterprise contracts over advertising, and the 4.7x revenue growth suggests the bet is working.

The takeaway: find the problem the market leader creates by existing. A structural limit they can't remove without damaging themselves. Then build the solution and wait for the constraint to become visible to everyone.

ElevenLabs: Let Developers Build Your Sales Team

Two Polish engineers, Mati Staniszewski and Piotr Dąbkowski, started ElevenLabs in 2022 because they were frustrated watching poorly-dubbed American movies in Poland. The product they built first was simple: 30 seconds of audio, and a model clones the voice.

They gave it away for free and developers adopted it immediately. The free tier created a distribution network the company never had to build itself: creators embedded ElevenLabs into tools, apps, games, and workflows. By the time enterprise buyers showed up, the product was already everywhere.

The ARR trajectory tells the story directly: ElevenLabs hit $330 million in ARR in 2025, up 175% year-over-year. Enterprise clients include Deutsche Telekom, Revolut, Square, and the Ukrainian Government.

In February 2026, ElevenLabs raised $500 million in a Series D led by Sequoia Capital at an $11 billion valuation, more than tripling its valuation from one year prior.

Google and Amazon both have voice synthesis. OpenAI has audio capabilities. None of them chose to make voice AI the center of their research and product roadmap. ElevenLabs did, and then distributed the product free to developers until the usage created its own enterprise demand.

The takeaway: the developer layer is the most efficient distribution channel in software. Bottom-up adoption is slower to start, harder to control, and faster to compound than direct sales. Freemium for developers is the cheapest sales force available.

The Pattern Across All Three AI Startups

Each of these AI startups found the one axis their main competitor couldn't match without hurting itself. Mistral's open source model is incompatible with OpenAI's closed, monetization-dependent architecture. Perplexity's answer-first approach is incompatible with Google's advertising business. ElevenLabs' developer-first free model is incompatible with how Google and Amazon build products around existing enterprise relationships. The sequence in each case was the same:

- Identify the structural constraint. Not a product gap. A move the competitor can't copy without changing who they are.

- Build for the excluded buyer. Mistral built for institutions Google and OpenAI can't serve. Perplexity built for users Google structurally frustrates. ElevenLabs built for developers the big platforms treat as secondary.

- Let distribution compound. Open source code gets forked and embedded everywhere. Free developer tools get integrated into products the builder never anticipated. Quality answers get shared by users who stopped settling for links.

None of this required unlimited resources. It required a clear-eyed analysis of what the giant in the room was prevented from doing by its own success.

What Founders Can Take From These AI Startup Cases

Most competitive analysis focuses on features. What does the competitor have that you don't? What can you build faster?

These three cases argue for a different question. What is the competitor prevented from doing by their own business model, customer base, or regulatory constraints? That gap is often more durable than any feature advantage because closing it would cost the incumbent more than it gains them.

Mistral's European institutional clients won't switch to a US closed model, because the constraint is structural. Perplexity's users won't go back to links, because they've stopped accepting the friction. ElevenLabs' developer ecosystem won't rebuild from scratch, because switching costs compound every time someone integrates the API into a product. Find the structural gap. Build for the buyer the giant can't reach. Then let compounding do what it does.

FAQ

Why does the developer-first model work better than going enterprise-direct?

Enterprise buyers are risk-averse and slow. Developers are neither. When ElevenLabs gave developers free access to voice cloning tools in 2022, those developers embedded the product into apps, games, content tools, and workflows. By the time enterprise procurement teams started evaluating voice AI, ElevenLabs was already inside their organization through the products their internal teams were using. This bottom-up pattern is harder to compete against than a top-down sale, because switching costs accumulate at every integration point.

Can this "structural gap" approach work for early-stage founders without a big brand or network?

Yes, and it's more accessible to early-stage founders than resource-intensive strategies. A startup competing on features against a well-funded incumbent is fighting on the incumbent's terms. A startup competing on a dimension the incumbent can't address is fighting on its own terms. The analysis required isn't expensive: map what the market leader's business model requires them to do, then identify the buyers left out by those requirements. Mistral did this from a standing start in 2023 with three researchers.

What's the biggest mistake founders make when trying to apply this model?

Confusing a feature gap with a structural gap. A feature gap is something the incumbent could close in the next product cycle. A structural gap is something they can't fix without changing their business model, regulatory position, or core customer relationship. Mistral's open source positioning isn't a feature: OpenAI could theoretically open source its models, but doing so would remove its primary competitive moat. The test is simple: if the incumbent copied your move tomorrow, would it hurt them? If the honest answer is yes, the gap is structural. If not, it's just a feature, and you're in a race you'll eventually lose.


How Three AI Startups Beat the Giants Without Outspending Them

Discover how three AI startups (Mistral, Perplexity, and ElevenLabs) outsmarted giants like OpenAI, Google, and Amazon. Learn their strategies for revenue-efficient scaling.

The USDC depeg of 2023 had nothing to do with bad reserves. The assets were fine, but they couldn't move fast enough. See why liquidity architecture matters more than what's in the vault.

Stablecoin stability is usually framed as a reserve quality problem. What assets back the token? Are they audited? Are they liquid? These are reasonable questions. They are also incomplete ones.

The deeper issue is speed. Stablecoins create obligations redeemable instantly at any hour, on any day, settled in seconds. The assets backing those obligations operate on a different schedule. Even the safest reserve instruments take time to convert to cash. When redemption demand outpaces available liquidity, the peg breaks, regardless of how sound the underlying assets are.

Key Takeaways

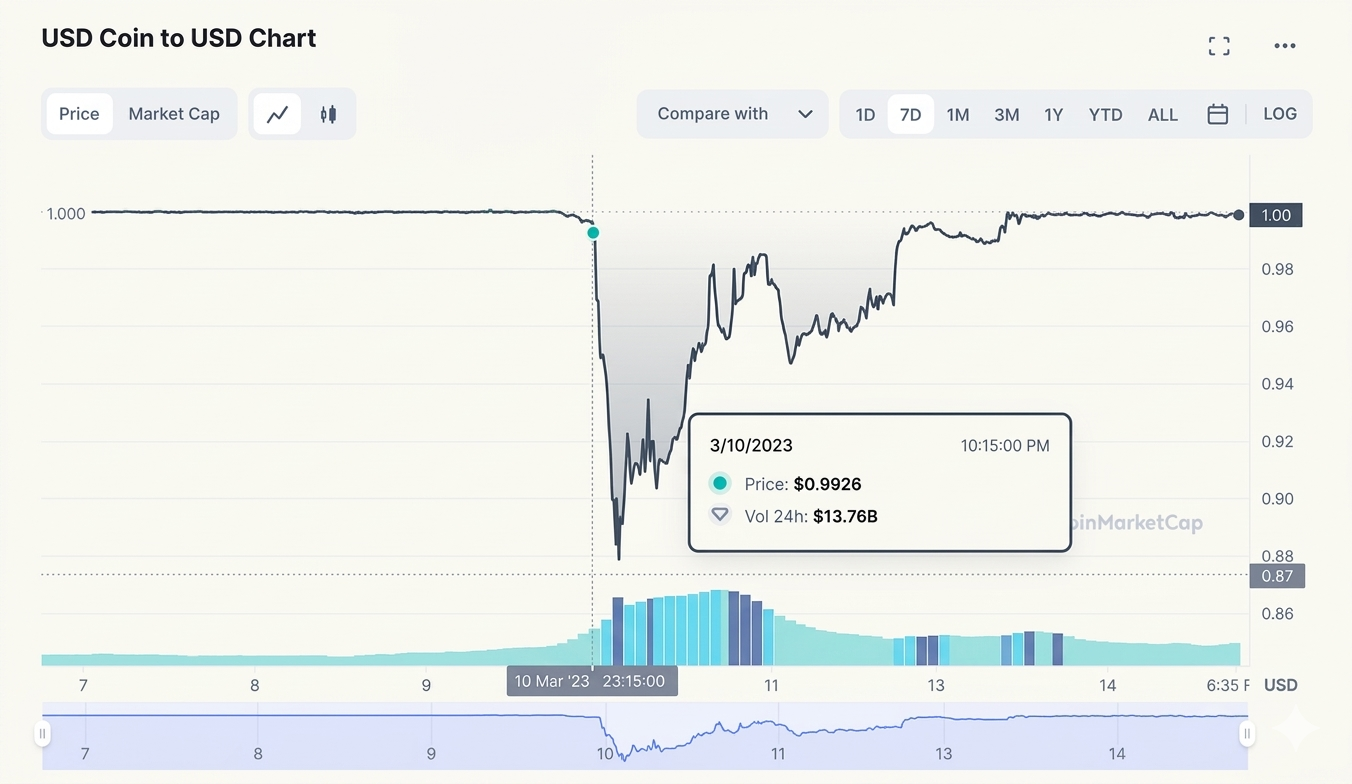

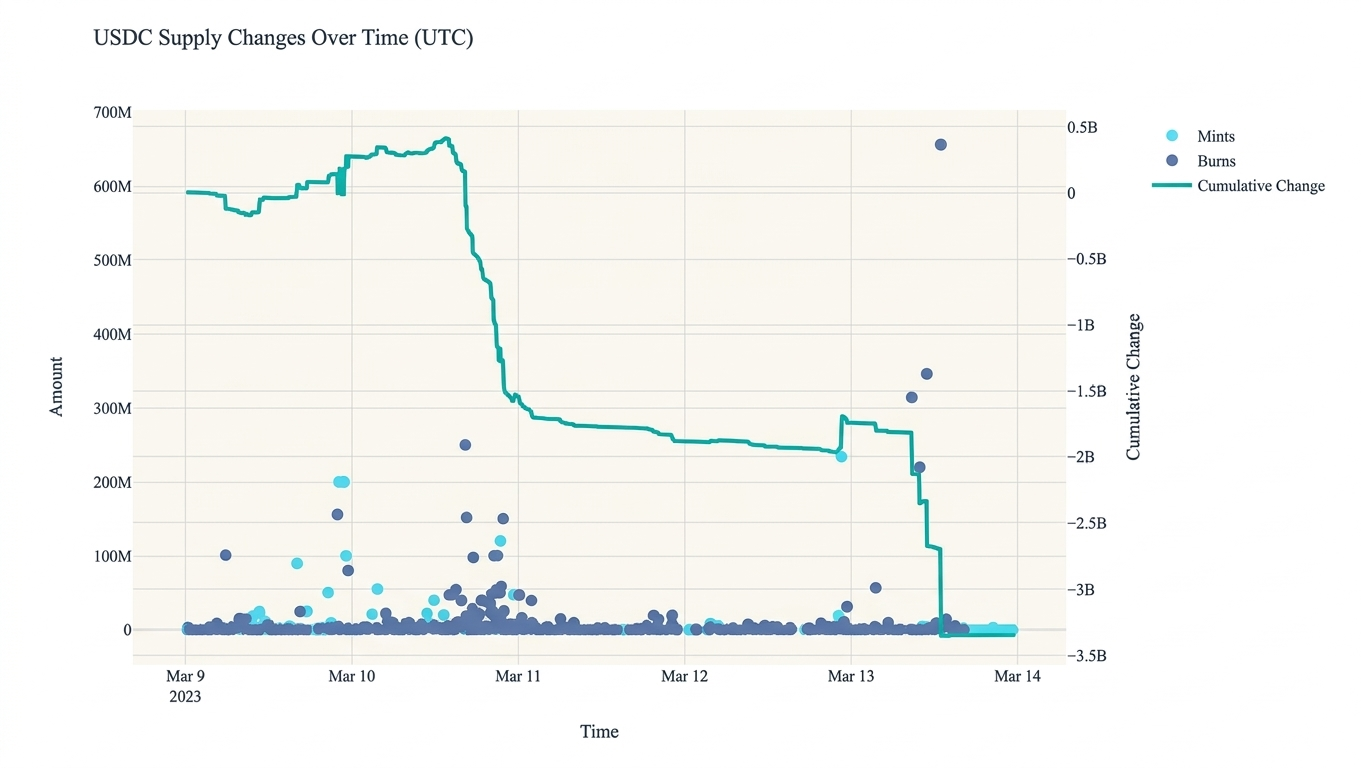

- Stablecoin stability depends on liquidity architecture. High-quality assets, including US Treasury bills, do not guarantee stability if they cannot be converted to cash faster than redemptions arrive. The USDC depeg in March 2023 was caused by $3.3 billion in reserves held at Silicon Valley Bank becoming temporarily inaccessible.

- The liquidity mismatch is structural. Stablecoins carry T+0 liabilities: instant redemption on demand. Most reserve assets settle at T+1 or slower. Depeg events occur when redemption volume exceeds immediately available liquidity.

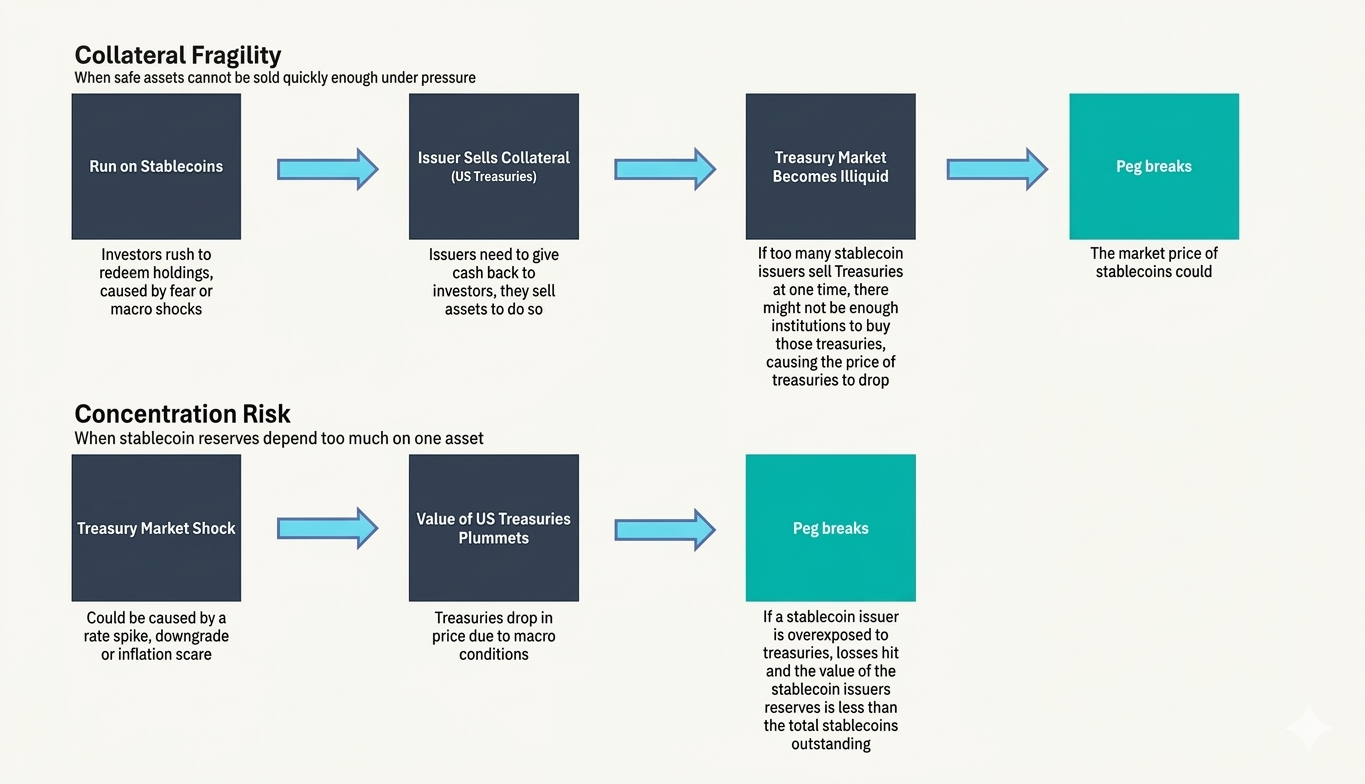

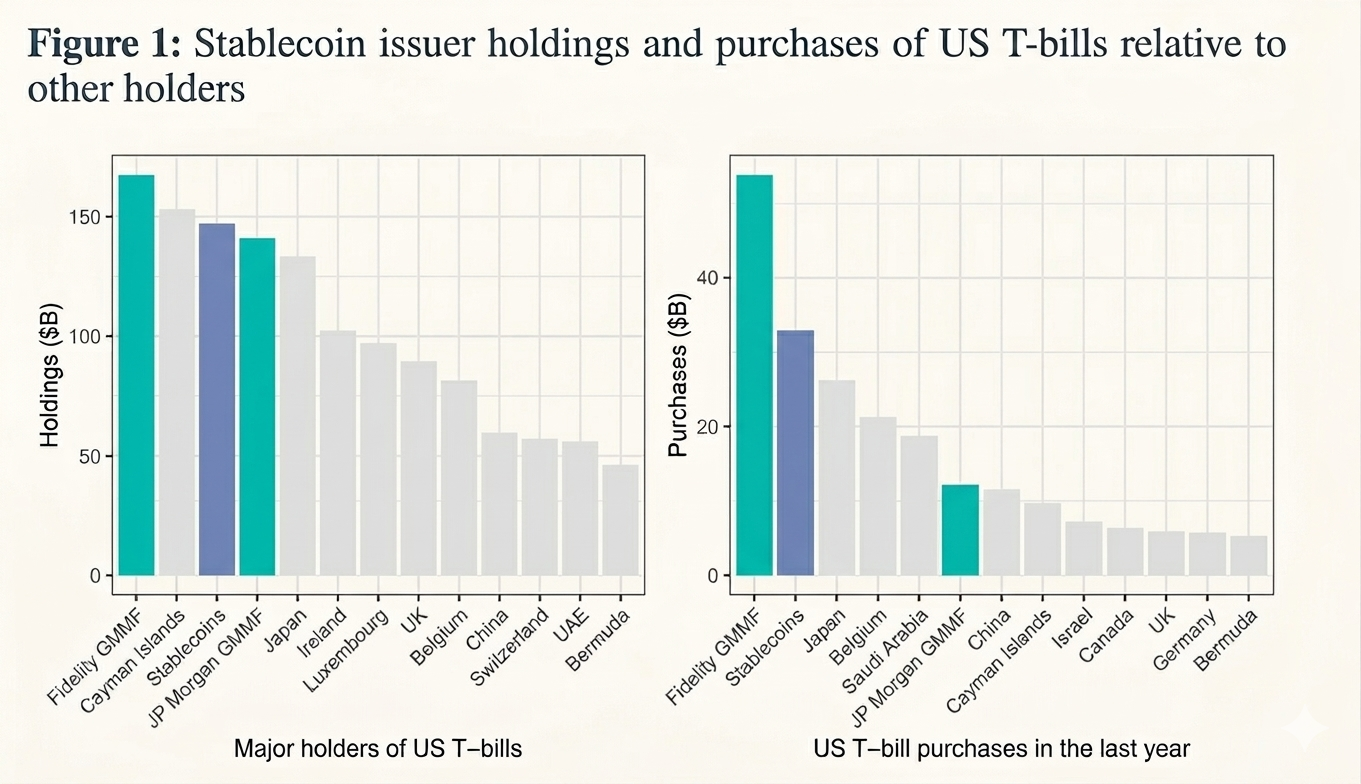

- Concentration in T-bills creates systemic risk at scale. Tether and Circle together hold over $145 billion in US Treasury bills. In a mass-redemption scenario, forced selling could reach approximately 20% of average daily T-bill market volume. The BIS has documented stablecoin reserve flows already suppressing 3-month T-bill yields by 2.5 to 8 basis points depending on market conditions.

- Sound reserve management is balance sheet management. Dynamic rebalancing toward cash as stress indicators rise, diversification across custodians and instruments, and threshold-triggered liquidity policies separate robust reserve management from the alternative. Stablecoin issuers at scale are operating something functionally similar to narrow banks or money market funds and need to manage reserves accordingly.

Case 1: The USDC Depeg During the Silicon Valley Bank Collapse

In March 2023, USD Coin, one of the largest dollar-backed stablecoins, temporarily lost its peg. Approximately $3.3 billion of Circle's reserves sat in Silicon Valley Bank when regulators shut it down.

Once Circle disclosed the exposure, three things happened in rapid sequence: USDC fell below $1 on secondary markets, mass redemptions began, and the broader market started questioning whether the reserves were actually accessible. The peg recovered fully once US regulators guaranteed all SVB depositors, but the recovery required government intervention.

The important distinction here is between reserve existence and reserve accessibility. The $3.3 billion was temporarily frozen, and for a system built on instant redemption, temporarily frozen is functionally the same as gone.

This episode was one of the first times the market saw the point clearly: stablecoin stability depends on how fast reserves can actually reach a holder requesting redemption.

Case 2: Stablecoins and the US Treasury Market

The second case is less dramatic, but more significant at scale.

Tether and Circle, the two largest stablecoin issuers, invest a substantial portion of reserves in US Treasury bills. Together, their T-bill holdings exceed $145 billion. The logic is sound: short-duration sovereign debt is liquid, low-risk, and consistent with regulatory expectations taking shape in major markets.

The scale creates a new problem. In a stress scenario, with mass redemptions across major stablecoins, issuers become forced sellers. Research estimates forced selling could reach approximately 20% of average daily T-bill trading volume. This is the fire-sale risk: rapid asset sales placing downward pressure on prices, creating losses for sellers and potentially spreading instability beyond the stablecoin market itself.

The BIS has documented the existing footprint. Sustained stablecoin inflows into T-bills suppress 3-month yields by roughly 2.5 to 3.5 basis points under normal conditions and up to 5 to 8 basis points during liquidity shortages. Stablecoin issuers are already large, permanent participants in the US government debt market.

The paradox is worth naming directly: the most reliable reserve assets can generate systemic risk when used to back obligations with instant redemption. High-quality collateral does not neutralize fire-sale risk. At sufficient scale, it creates it.

The Common Problem Both Cases Reveal

On the surface, these two cases look different. One involves inaccessible bank deposits, the other potential forced selling of Treasury bills. The underlying architecture problem is identical.

Stablecoins create liabilities redeemable instantly and continuously: T+0. The assets backing those liabilities, even the safest ones, convert to liquidity more slowly and depend on the infrastructure of traditional financial markets: settlement windows, banking hours, counterparty availability. This gap between the speed of obligations and the speed of reserve liquidity is the structural mismatch at the core of every fiat-backed stablecoin.

As the stablecoin market grows, this mismatch stops being purely an internal issuer problem. It starts affecting traditional financial markets. With more than $145 billion in T-bill holdings, stablecoin issuers influence the pricing of one of the world's most important financial instruments. In a stress scenario, the liquidity problem at the issuer level becomes a volatility problem at the market level.

The reserve liquidity problem therefore operates on two levels simultaneously: for individual issuers, it is the risk of failing to meet instant redemptions; for the financial system, it is a potential source of pressure on sovereign debt markets when mass redemptions force rapid asset sales.

What Economic Models of Reserve Management Show

Academic models of stablecoin reserve management treat the issuer as a system permanently balancing two objectives: maximizing yield on reserves and maintaining sufficient instant liquidity for redemptions.

The key finding from these models: depeg events occur when redemption volume exceeds immediately available liquidity. A stablecoin can be fully solvent in aggregate and still fail to meet redemption demand in real time. Solvency and liquidity are different conditions, and only one of them matters in the moment a holder wants out. Three additional insights emerge consistently.

- Redemption flows cluster. One wave of redemptions raises the probability of the next, because each round signals potential instability to remaining holders. The self-reinforcing nature of redemption waves means the gap between a manageable outflow and a crisis can close faster than static reserve buffers anticipate.

- Average-day liquidity is the wrong target. Reserve management calibrated to typical daily flows will fail under the specific conditions most likely to produce a crisis. Optimal strategies need to be stress-tested against tail scenarios.

- Threshold policies outperform static allocations. The most effective reserve management approaches operate with trigger logic: during calm conditions, hold a higher share of yield-generating assets; when stress indicators cross a defined threshold, shift rapidly toward cash. The speed of rebalancing matters as much as its direction.
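The gap between solvency and liquidity is easier to see in a toy simulation. The sketch below is a simplified model built for this article, not one of the academic models referenced above: reserves are split into instant cash and T-bills whose sale proceeds arrive after a settlement lag, and every number in the example is hypothetical.

```python
def simulate_redemptions(cash, tbills, redemptions, settle_lag_days=1):
    """Return the unmet redemption backlog (USD) at the end of each day.

    cash            -- reserves available instantly (T+0)
    tbills          -- reserves that only become cash settle_lag_days after sale
    redemptions     -- new redemption requests per day
    """
    if settle_lag_days < 1:
        raise ValueError("settle_lag_days must be at least 1")
    pending = {}      # settlement day -> USD proceeds still in flight
    backlog = 0.0     # redemptions requested but not yet paid
    shortfalls = []
    for day, new_demand in enumerate(redemptions):
        cash += pending.pop(day, 0.0)          # earlier sales settle today
        demand = backlog + new_demand
        paid = min(cash, demand)
        cash -= paid
        backlog = demand - paid
        shortfalls.append(backlog)
        # Sell only what is not already covered by sales still settling.
        in_flight = sum(pending.values())
        sale = min(tbills, max(backlog - in_flight, 0.0))
        if sale > 0:
            tbills -= sale
            arrival = day + settle_lag_days
            pending[arrival] = pending.get(arrival, 0.0) + sale
    return shortfalls


# Hypothetical issuer: 100 in reserves (10 cash, 90 T-bills), 45 redeemed in total.
print(simulate_redemptions(cash=10, tbills=90, redemptions=[5, 30, 10]))
```

The issuer in this example holds more than twice the assets needed to pay every request, yet it ends the second and third days with unpaid redemptions, because the proceeds of each sale arrive one day after the demand does. That is the sense in which a stablecoin can be solvent in aggregate and illiquid in the moment.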

Practical Principles for Reserve Management

From the case analysis and economic modeling, five principles emerge for reserve management built around liquidity rather than quality alone.

Dynamic rebalancing

Reserve composition should not be static. During calm periods, a higher allocation to yield-bearing assets makes sense. When stress signals appear (rising redemption volumes, market volatility, counterparty risk indicators), the cash share needs to increase quickly. A reserve portfolio optimized for normal conditions and held unchanged through stress is a liability.

Threshold liquidity policy

The most effective approach is explicitly conditional: if stress signals remain low, portfolio structure stays unchanged; if risk crosses a defined level, liquidity increases sharply. This threshold logic prevents unnecessary yield sacrifice during calm periods while ensuring rapid response when it matters.
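As a rough illustration of that conditional logic, the sketch below switches between two target cash shares when a stress score crosses a threshold. Everything here is an assumption made for the example: the stress score (a number between 0 and 1 that an issuer would have to define), the 0.6 threshold, and the 10% and 50% cash shares.

```python
def target_cash_share(stress_score, threshold=0.6, calm_share=0.10, stress_share=0.50):
    """Two-regime policy: hold calm_share in cash until stress crosses the threshold."""
    return stress_share if stress_score >= threshold else calm_share


def rebalance_order(cash, tbills, stress_score, **policy):
    """USD of T-bills to sell (positive) or buy (negative) to reach the target."""
    total = cash + tbills
    target_cash = target_cash_share(stress_score, **policy) * total
    return target_cash - cash


# Hypothetical portfolio: 10 in cash, 90 in T-bills.
calm = rebalance_order(10, 90, stress_score=0.2)      # at target, nothing to do
stressed = rebalance_order(10, 90, stress_score=0.8)  # sell T-bills to raise cash
```

With these assumed parameters, the calm case needs no trade and the stressed case calls for moving 40 out of T-bills into cash. In practice the order would also have to settle before the cash is usable, which is why the speed of rebalancing matters as much as its direction.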

Diversification across instruments, custodians, and counterparties

The SVB episode illustrated custodian concentration risk directly. No single bank, T-bill maturity, or custody arrangement should represent a concentration severe enough to impair instant liquidity if it becomes unavailable. Diversification in this context is about eliminating single points of failure in the path from reserve asset to redeemed token.

Managing redemption flows directly

Some models propose using variable fees on token creation and redemption as a stabilization tool. These fees can slow redemption pressure during stress and buy time for orderly asset liquidation. The tradeoff is user experience; stablecoins built on frictionless redemption may resist fees as a design compromise, even when they serve a genuine risk management function.
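One way such a fee could be shaped, purely as a sketch: zero in normal conditions, rising with redemption pressure, and capped. The `outflow_ratio` input (recent redemptions as a fraction of supply), the 5% trigger, the slope, and the cap are hypothetical choices, not parameters taken from any published model or issuer.

```python
def redemption_fee_bps(
    outflow_ratio,
    trigger=0.05,            # fee starts once recent outflows exceed 5% of supply
    base_bps=0.0,            # fee in normal conditions
    slope_bps_per_pct=4.0,   # extra bps per percentage point above the trigger
    cap_bps=50.0,            # upper bound, to limit the hit to user experience
):
    """Redemption fee in basis points as a capped linear function of outflows."""
    excess_pct = max(outflow_ratio - trigger, 0.0) * 100
    return min(base_bps + slope_bps_per_pct * excess_pct, cap_bps)


for ratio in (0.02, 0.10, 0.30):
    print(f"outflows {ratio:.0%} of supply -> fee {redemption_fee_bps(ratio):.1f} bps")
```

The cap reflects the tradeoff described above: a fee that grows without limit would protect liquidity but undermine the promise of redemption at par.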

Treating transparency as a two-edged instrument

This is the principle most often misunderstood. Reserve transparency builds confidence when market trust is already high: holders who can verify reserve quality feel less pressure to redeem preemptively. When confidence is fragile, the same disclosures can function as coordination signals. A holder reading a report showing concentration in a specific institution can update negatively and act, and doing so publicly may trigger others to follow. The disclosure meant to reassure becomes the focal point around which a redemption wave organizes. This is the paradox of transparency: its effect depends entirely on the level of trust and market expectations at the time of disclosure.

Non-USD Stablecoins and the Currency Dimension

One underappreciated aspect of the reserve liquidity problem is how it compounds for non-USD stablecoins.

Euro-denominated stablecoins remain a fraction of the overall market; the ECB places their circulation in the hundreds of millions. But the structural problem is worse for them. Euro-denominated reserve assets are less liquid in stress conditions, the secondary markets for euro-pegged tokens are thinner, and the regulatory frameworks are newer and less tested.

JD.com and Ant Group have reportedly lobbied for an offshore yuan stablecoin in Hong Kong, partly as a counter to growing dollar dominance in digital payments. If those projects proceed at scale, they will inherit the same liquidity mismatch between T+0 liabilities and slower-settling reserve assets, but in a currency and regulatory environment with even less institutional infrastructure for managing it.

The dollar dominance of the stablecoin market is often framed as a geopolitical fact or a network effect story. It is also, partly, a liquidity fact: dollar-denominated reserves are more liquid, more widely traded, and more quickly convertible in stress than alternatives. For non-USD issuers building at scale, closing the gap on reserve liquidity is not optional.

The Regulatory Dimension in 2026

The GENIUS Act, signed into US law in July 2025, requires stablecoin reserves to be held in liquid assets such as US dollars and short-term Treasuries, and it imposes reserve disclosure requirements. MiCA in Europe establishes parallel authorization requirements for asset-referenced and e-money tokens.

Both frameworks represent meaningful progress, particularly in improving the quality and transparency of reserves. However, they stop short of addressing a more fundamental issue: liquidity mismatch.

As Anastasia Zenina, Strategic Consultant at Mezen, puts it, this mismatch is structural. Even high-quality, liquid assets can become a source of instability when they are held at scale against liabilities that can be redeemed instantly (T+0). In stress scenarios, this dynamic can trigger fire-sale risks, regardless of how safe the underlying assets are. Regulatory clarity on reserve composition is necessary, but it is not sufficient.

Recent signals from policymakers reinforce this concern. In March 2026, the European Central Bank (ECB) warned that the rapid growth of stablecoins could weaken monetary policy transmission and pull deposits away from traditional banks. This shifts the conversation toward a more systemic question: what happens when large-scale redemptions occur and how do those effects propagate through the financial system?

As stablecoins continue to grow, their reserves, often concentrated in instruments like Treasury bills, become increasingly intertwined with traditional financial markets. Large-scale redemptions would no longer be contained within the crypto ecosystem; they could exert direct pressure on core funding markets. The key risk is no longer just redemption itself, but how that stress spreads across the system.

From this perspective, tokenized deposits offer a structurally different approach. Rather than relying on external reserve assets, they represent traditional bank deposits on-chain. This eliminates the need to liquidate assets to meet redemptions.

Liquidity, in this model, already resides within the banking system, supported by central bank money and existing payment infrastructure. Instead of managing a mismatch, the model largely avoids it altogether.

This distinction becomes even more critical outside U.S. dollar markets, where liquidity is typically thinner and more fragile under stress. Over time, the competitive landscape may shift accordingly, not between individual stablecoins, but between two fundamentally different models:

- Asset-backed tokens, dependent on external reserves

- Bank-integrated tokens, embedded within the traditional financial system

The long-term outcome will likely depend on which model proves more resilient under real-world stress.

The Core Insight

Stablecoin stability is a liquidity problem. The assets can be excellent and the architecture can still fail, as happened to USDC in 2023 in a relatively mild scenario. At $300 billion in market cap and growing, the stakes for getting this right extend well beyond any individual issuer.

The projects and institutions that treat stablecoin reserve management with the seriousness it requires, by building dynamic liquidity buffers, diversifying across custodians, and stress-testing for correlated tail scenarios, are building systems with a plausible chance of surviving the conditions most likely to test them.

Everyone else is holding high-quality assets and hoping the gap between T+0 liabilities and T+1 settlement never becomes visible at the wrong moment. It will.

Frequently Asked Questions

What is the liquidity mismatch in stablecoin reserves?

The liquidity mismatch is the gap between the speed of stablecoin liabilities and the speed of reserve assets. Stablecoins promise instant redemption (T+0 settlement) at any hour. The assets backing those redemptions, even conservative ones like US Treasury bills, settle at T+1 or require active secondary markets to liquidate quickly. This mismatch is structural: it exists in every fiat-backed stablecoin design and cannot be removed without either slowing redemptions or holding only central bank reserves. The practical consequence is that a stablecoin can be fully solvent on paper and still fail to meet redemption demand in real time if liquidity is concentrated in assets too slow to convert.
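The difference between being solvent and being liquid can be made concrete with a small calculation. The sketch below uses a hypothetical `ReserveBucket` type and made-up balances; it assumes each reserve asset can be labeled with the number of days it takes to turn into cash, which is a simplification of how real settlement and market depth behave.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReserveBucket:
    name: str
    amount: float      # value in dollars
    days_to_cash: int  # 0 = available same day (T+0), 1 = T+1, and so on


def is_solvent(buckets: list[ReserveBucket], liabilities: float) -> bool:
    """Total reserves cover total tokens outstanding."""
    return sum(b.amount for b in buckets) >= liabilities


def same_day_shortfall(buckets: list[ReserveBucket], redemptions_today: float) -> float:
    """Redemption demand that cannot be met from T+0 assets alone."""
    instant = sum(b.amount for b in buckets if b.days_to_cash == 0)
    return max(0.0, redemptions_today - instant)


# Illustrative portfolio: fully backed, but mostly in T+1 assets.
reserves = [
    ReserveBucket("cash", 10.0, days_to_cash=0),
    ReserveBucket("t_bills", 90.0, days_to_cash=1),
]
print(is_solvent(reserves, liabilities=100.0))
print(same_day_shortfall(reserves, redemptions_today=25.0))
```

In this example the issuer is fully backed, yet a redemption request for a quarter of supply leaves a same-day gap that only arrives as cash the next day.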

Why did USDC lose its peg in March 2023 if the reserves were legitimate?

Circle held approximately $3.3 billion of USDC reserves at Silicon Valley Bank when regulators shut the bank on March 10, 2023. The funds were frozen pending resolution proceedings. For a stablecoin requiring instant redemption, temporarily inaccessible reserves produce the same immediate effect as insufficient reserves: the issuer cannot honor redemptions at par, secondary market prices fall below $1, and a redemption wave follows. USDC recovered fully once US regulators guaranteed all depositors, but the episode showed that reserve location and custodian risk matter as much as reserve quality.

How large is the stablecoin market's exposure to US Treasury bills, and why does it matter?

Tether and Circle together hold over $145 billion in US Treasury bills as of early 2026, making stablecoin issuers among the largest non-government buyers of short-duration US sovereign debt. Under normal conditions, BIS research shows stablecoin inflows into T-bills suppress 3-month yields by 2.5 to 3.5 basis points. During liquidity shortages, the effect reaches 5 to 8 basis points. In a stress scenario involving mass redemptions across major stablecoins, forced selling of T-bills could reach approximately 20% of average daily market volume, enough to move prices materially. The assets considered safest for reserves are also the assets whose forced sale creates the most systemic pressure.

What is the paradox of transparency in stablecoin reserve management?

Reserve transparency is generally positive for stablecoin stability when market confidence is high: holders who can verify reserve quality feel less pressure to redeem preemptively. The paradox arises when confidence is already fragile. In low-trust conditions, detailed real-time reserve disclosures can function as coordination signals: a holder reading a report showing concentration in a specific institution can update negatively and act. If enough holders do so simultaneously, the disclosure meant to prevent a run can accelerate one. This does not argue against transparency, since opacity creates worse long-run risks, but it means transparency should be paired with robust liquidity architecture rather than treated as a substitute for it.

What reserve management practices reduce stablecoin run risk in practice?

Four practices emerge consistently from economic modeling and case analysis. First, dynamic rebalancing: reserve composition should shift toward cash as stress indicators rise. Second, custodian and instrument diversification: no single bank, T-bill maturity, or custody arrangement should represent a concentration severe enough to impair instant liquidity if it becomes unavailable. Third, stress-scenario calibration: reserve buffers should be sized for correlated tail scenarios, simultaneous redemption pressure, and market disruption. Fourth, treating reserve management as a liability-driven investment problem: stablecoin issuers at scale face the same structural challenges as narrow banks and money market funds and benefit from applying the same frameworks, including liquidity coverage ratios and formal stress testing.


For most institutional teams, the first question about stablecoins isn’t what they are. It’s something much more practical:

Why would we issue one, and where does the value actually come from?

In conversations with banks and payment companies, the discussion rarely starts with blockchain infrastructure or token standards. It usually begins with economics.

Can this improve how we move liquidity? Can it reduce transaction costs? Can it help us retain revenue that currently leaks into correspondent banking layers?

Stablecoins are often presented as a technological upgrade. But for financial institutions, they are better understood as a change in how settlement works and who captures value in the process.

Same payment flows, different constraints

Banks and payment companies operate across nearly identical transaction flows. Both move money across borders. Both manage FX exposure. Both maintain liquidity buffers to support card settlement, merchant payouts, or real-time transfers.

But they do not operate under the same regulatory permissions.

In most jurisdictions, payment companies cannot earn interest on customer funds. That restriction fundamentally shapes how they think about infrastructure.

Launching a stablecoin allows a payment company to capture value from balances that would otherwise remain idle in segregated accounts. In practice, this creates a programmable internal settlement layer that helps manage liquidity across operational flows without relying entirely on banking partners.

For banks, however, the case is less obvious.

Banks already monetize deposits. The upside of stablecoins is not necessarily yield, but rather operational efficiency. In particular, the ability to:

- reduce settlement costs in cross-border transfers

- improve liquidity mobility across jurisdictions

- retain FX-related fee income

- simplify reconciliation across payment systems

In that sense, stablecoins are less about earning more and more about losing less.

Where the economics actually change

A significant share of fee income for retail and SME-focused banks comes from international transfers and FX spreads. These flows typically depend on intermediary banks, card networks, and clearing systems.

Routing and reconciliation are distributed across multiple institutions. Liquidity is often only available after settlement. In some cases, post-settlement matching is required across different systems.

Stablecoin-based settlement changes this dynamic.

Instead of executing pricing logic and reconciliation across separate banking entities, transaction value and transaction information can move together and settle atomically. FX conversion, execution rules, and settlement logic can be embedded directly into the transfer layer.

In practical terms, and particularly in high-volume cross-border corridors, this reduces:

- reconciliation overhead

- compliance duplication

- transaction routing costs

- operational exceptions

Removing correspondent banking layers from international transfers improves transaction economics. Under conservative assumptions, this can translate into higher throughput, retained FX spreads, and improved capital efficiency — especially for institutions whose income is strongly tied to payment activity.
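The idea that value, FX conversion, and settlement can move together and settle atomically can be illustrated with an in-memory analogue. The sketch below is not how any particular chain or settlement network implements it: `settle_fx`, the liquidity `pool` account, and the balances are hypothetical, and on a real network the all-or-nothing guarantee would come from the transaction execution layer rather than from copying a dictionary.

```python
from decimal import Decimal

Balances = dict[tuple[str, str], Decimal]  # (account, currency) -> balance


class SettlementError(Exception):
    """Raised when a leg cannot be funded; the caller's balances are unchanged."""


def settle_fx(balances: Balances, sender: str, receiver: str, pool: str,
              sell_ccy: str, buy_ccy: str, amount: Decimal, rate: Decimal) -> Balances:
    """Apply all four legs of an FX transfer, or none of them."""
    staged = dict(balances)  # work on a copy so a failure leaves no partial state
    payout = amount * rate
    legs = [
        (sender, sell_ccy, -amount),   # sender pays in the sold currency
        (pool, sell_ccy, amount),      # pool receives the sold currency
        (pool, buy_ccy, -payout),      # pool pays out the bought currency
        (receiver, buy_ccy, payout),   # receiver is credited
    ]
    for account, ccy, delta in legs:
        new_balance = staged.get((account, ccy), Decimal("0")) + delta
        if new_balance < 0:
            raise SettlementError(f"{account} cannot fund the {ccy} leg")
        staged[(account, ccy)] = new_balance
    return staged  # all legs succeeded; the caller adopts the new state


book = {("alice", "USD"): Decimal("100"), ("pool", "EUR"): Decimal("500")}
book = settle_fx(book, "alice", "bob", "pool", "USD", "EUR",
                 amount=Decimal("100"), rate=Decimal("0.9"))
print(book[("bob", "EUR")], book[("alice", "USD")])
```

Because the rate and all legs are applied in one step, there is no intermediate state in which the sender has been debited but the receiver has not been credited, which is the state that post-settlement reconciliation normally exists to detect.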

Stablecoins as a treasury instrument

Beyond payments, stablecoins introduce a different approach to treasury management.

Most internationally active banks operate across multiple jurisdictions and maintain liquidity in geographically distributed correspondent accounts. Funds allocated for day-to-day operations often sit idle in fiat accounts, not because they are unused but because they must remain accessible in specific markets.

This fragmentation becomes especially visible during periods of stress or unexpected outflows. Moving liquidity across entities may take a full banking day. It may take even longer if initiated before weekends or holidays.

Settlement lag introduces opportunity cost. In some cases, it also introduces liquidity risk.

Blockchain-based treasury rails remove geographic fragmentation by allowing liquidity to move continuously, settle instantly, and be reallocated dynamically across operational flows.

This becomes particularly relevant for institutions managing international payments or card-linked settlement, where parallel fiat and crypto liquidity buffers must coexist.

Striking the wrong balance among highly liquid operational funds, yield-generating reserves, and instant settlement buffers can result in missed yield opportunities or forced liquidation losses.

This makes treasury design a central, rather than auxiliary, component of any stablecoin issuance strategy.

Issuance is the easy part

Launching a stablecoin is only the beginning.

Achieving operational viability requires integration with custody providers, exchange infrastructure, wallet networks, and merchant systems.

In many cases, custody integration introduces trade-offs between internal infrastructure control and reliance on third-party providers — each with their own compliance requirements.

Transaction screening across multi-hop wallet chains remains computationally expensive.

As transaction volume increases, so does the probability of exposure to flagged funds. This increases the operational overhead associated with managing that exposure.
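To see why multi-hop screening gets expensive, it helps to sketch it as a graph search. The function below is a simplified illustration, not a compliance tool: it assumes a hypothetical `senders_of` mapping from each address to the addresses that funded it, and it ignores amounts, timing, and the risk scoring that real screening providers apply.

```python
from collections import deque


def flagged_within_hops(senders_of: dict[str, list[str]], start: str,
                        flagged: set[str], max_hops: int) -> tuple[set[str], int]:
    """Breadth-first search over the funding sources of `start`.

    Returns the flagged addresses found within `max_hops` and the number of
    addresses visited, which is a rough proxy for screening cost.
    """
    seen = {start}
    hits: set[str] = set()
    queue = deque([(start, 0)])
    while queue:
        address, hops = queue.popleft()
        if address in flagged:
            hits.add(address)
        if hops == max_hops:
            continue  # do not expand beyond the hop limit
        for source in senders_of.get(address, ()):
            if source not in seen:
                seen.add(source)
                queue.append((source, hops + 1))
    return hits, len(seen)


# Hypothetical funding graph: who sent funds to whom.
graph = {
    "merchant": ["w1", "w2"],
    "w1": ["w3"],
    "w2": ["w3", "w4"],
    "w4": ["mixer"],
}
for depth in (2, 3):
    print(depth, flagged_within_hops(graph, "merchant", {"mixer"}, max_hops=depth))
```

In this toy graph the flagged address only appears at the third hop, so a two-hop check misses it. On real transaction graphs, each extra hop multiplies the number of addresses to inspect by roughly the average number of funding sources per address, which is where the computational cost comes from.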

Enabling stablecoin acceptance across merchant networks or brokerage platforms often requires both legal onboarding and technical integration. Even when an institution can receive stablecoins, it must still convert, route, or deploy them into downstream financial workflows.

Settlement is no longer the final step. It becomes part of the infrastructure.

A structural shift in settlement ownership

Perhaps the most significant implication of stablecoins is structural rather than operational.

When settlement moves outside the banking system, payment rules, pricing logic, compliance enforcement, and reconciliation can be executed at the infrastructure layer — rather than within bank-led transaction routing.

Over time, this may shift FX pricing control, transaction fee capture, and reconciliation logic away from financial institutions and toward programmable settlement networks. That turns stablecoin adoption from an efficiency decision into a strategic positioning question.

Where stablecoins fit today

Stablecoins are not a replacement for existing financial infrastructure.

They are increasingly used alongside it in:

- cross-border payments

- treasury mobility

- FX settlement

- card-linked payment flows

Issuance is the entry point.

In practice, banks and payment companies use stablecoins not as a new asset class, but as an additional settlement and liquidity layer that sits alongside fiat infrastructure.

In payment workflows, they can reduce routing costs and improve transaction speed in cross-border transfers.

In treasury operations, they enable liquidity to move across jurisdictions without relying on correspondent banking networks.

In FX settlement, they allow value to move together with transaction logic, reducing reconciliation overhead and execution delays.

Their role is not to replace existing systems but to improve how liquidity is allocated, moved, and settled across institutional financial operations.
